OpenTelemetry · OTLP · Structured Logs · AI SDLC

OpenTelemetry Log Analysis: Distill the OTLP Signal That Matters for AI-Generated Code.

Your OTel backend stores everything. That’s the job. But auto-instrumentation means more haystack and less needle, and dashboards weren’t built for the AI SDLC cadence. Fan your OTLP logs to Dstl8 — one more exporter, nothing to rip out — and get a distilled signal layer Möbius reads for you.

43%

OF AI-GENERATED CODE FAILS IN PROD WITHOUT MANUAL DEBUGGING (LIGHTRUN 2026)

77%

OF ENG LEADERS LACK CONFIDENCE IN OBSERVABILITY FOR AI OPS

80%

OF LOGS AT LARGE COMPANIES NEVER GET READ

1

OTLP EXPORTER TO ADD, NOTHING TO RIP OUT

2 min

FROM brew install gonzo TO FIRST USABLE STREAM

FIVE FAILURE MODES

Five Ways Your OTel Backend Stores Everything and Surfaces Nothing.

OpenTelemetry is the right standard. Your backend ingests the logs. The gap isn’t collection — it’s what happens when auto-instrumentation turns every HTTP call into a log line, when AI-generated code ships hourly, and when the question becomes “what’s new since the last deploy” instead of “what’s our p99.” These are the patterns Dstl8 is built to complement.

01

Auto-instrumentation gives you the haystack, not the needle

OTel auto-instrumentation is the point. Every HTTP call, DB query, middleware hop, and framework callback becomes a structured log event. Your backend ingests all of it, faithfully. None of it tells you which line is the incident.

# auto-instrumented service, average day

HTTP requests: 1.2M logs

DB queries: 3.8M logs

framework callbacks: 7.4M logs

# search: “error” → 40,000 results

# actual incidents: 3

02

DEBUG vs production is a no-win static config

Leave DEBUG on in prod: the storage bill spikes and real signals drown. Turn it off: you’re blind when the AI-generated deploy breaks something subtle. Dynamic per-request filtering exists in theory; in practice, nobody has the engineering budget to maintain it.

DEBUG off: blind in incident

DEBUG on: $thousands/mo extra, signal drowns

dynamic: weeks of collector engineering

# every team picks one of the three losing options

03

Your backend stores everything. It doesn’t know what’s new.

Loki, Datadog, New Relic, SigNoz, Elastic: they’re designed for search, aggregation, and long-term retention, and they do that job well. What they don’t do is notice when a new pattern appears that wasn’t there before, because they were built for an era of human-scale deploys. The AI SDLC has a different cadence.

# what your backend answers well

“show me error rate over 30 days”

“p99 latency for checkout-api”

“logs matching trace_id=abc”

# what it doesn’t answer

“what pattern showed up in the last hour that wasn’t there before?”

04

Dashboards live in tabs. AI-generated code lives in the editor.

The developer reviewing the patch Claude Code or Cursor just wrote doesn’t want to context-switch to Grafana to check if it’s misbehaving. The feedback loop breaks at the tab boundary.

[editor] Claude Code: “shipped fix for issue #1423”

[editor] developer: accept?

[???] is the staging environment happy?

[tab] → open dashboard

[tab] → filter by service

[tab] → scan for anomalies

[editor] flow: gone

05

“Unknown unknowns” are what AI-written code breaks

The failures you have alerts for are the failures you already knew about. AI-generated code introduces new failure modes, and by definition you haven’t built a dashboard for them yet. Your existing backend can search for them — once you know what to search for.

known failures: alerts configured ✓

known patterns: dashboards built ✓

new pattern from AI-written code: silent until someone notices

# observability science: find unknown unknowns

# traditional backend: find known knowns faster

WHY THIS MATTERS

OpenTelemetry solved the open instrumentation and transport problem. Your backend solved the storage and search problem. Neither was designed for the question that matters most right now: what did the AI-generated code in the last deploy actually do? Dstl8 is the distillation and signal layer that answers that question, sitting alongside what you already have.

The solution

How Engineering Teams Get Signal from OTLP Logs Without Replacing Anything.

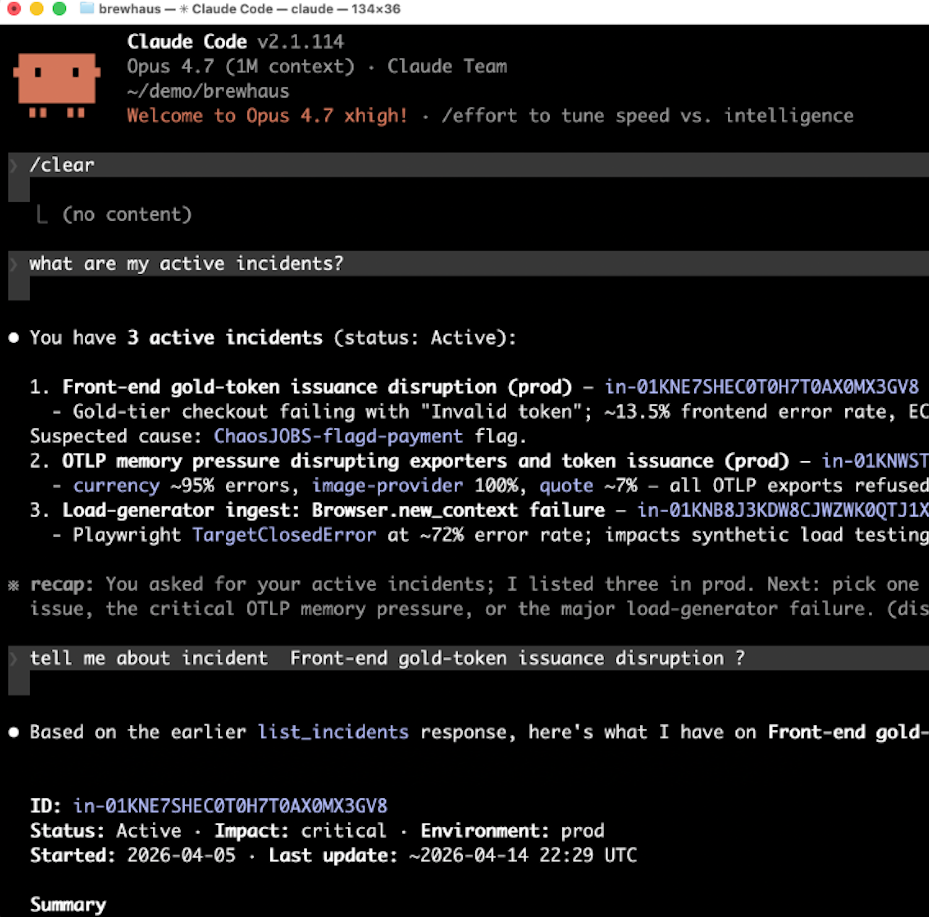

Fan your OTLP logs to Dstl8. Keep your existing backend exactly as it is. Möbius reads the distilled signal — pattern clusters, anomalies, ranked incidents — and answers questions from Claude Code, Cursor, or your terminal. Nothing to rip out.

One more OTLP exporter, nothing to rip out

Dstl8 accepts OTLP logs natively. Add a Dstl8 exporter alongside your existing one and fan your log stream out. Your Loki, Datadog, or SigNoz setup stays exactly as it is, as the system of record for search and retention.
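Conceptually, fanning out means each batch of log records is handed to every configured exporter independently. In an OTel Collector, that amounts to listing both exporters in the logs pipeline. The Python sketch below only illustrates the idea. FanOutExporter, LogRecord, and the two sink functions are hypothetical names, not OpenTelemetry SDK or Dstl8 APIs, and the per-sink failure isolation is a design choice of the sketch.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Sequence


# Hypothetical, minimal log record: only the fields this sketch needs.
@dataclass
class LogRecord:
    service: str
    severity: str
    body: str


# An "exporter" here is any callable that ships a batch somewhere.
Exporter = Callable[[Sequence[LogRecord]], None]


@dataclass
class FanOutExporter:
    """Hypothetical fan-out: send every batch to each named exporter."""
    exporters: Dict[str, Exporter]
    failures: Dict[str, int] = field(default_factory=dict)

    def export(self, batch: Sequence[LogRecord]) -> List[str]:
        delivered = []
        for name, exporter in self.exporters.items():
            try:
                exporter(batch)
                delivered.append(name)
            except Exception:
                # Isolate failures: one sink erroring does not stop the others.
                self.failures[name] = self.failures.get(name, 0) + 1
        return delivered


if __name__ == "__main__":
    backend_store: List[LogRecord] = []

    def existing_backend(batch: Sequence[LogRecord]) -> None:
        backend_store.extend(batch)  # stand-in for Loki / Datadog / SigNoz

    def flaky_signal_layer(batch: Sequence[LogRecord]) -> None:
        raise ConnectionError("signal-layer endpoint unreachable")  # simulated outage

    fan_out = FanOutExporter({"backend": existing_backend, "dstl8": flaky_signal_layer})
    batch = [LogRecord("checkout-api", "ERROR", "payment timeout")]
    print(fan_out.export(batch))                 # ['backend']
    print(len(backend_store), fan_out.failures)  # 1 {'dstl8': 1}
```

Catching each sink’s errors separately is what keeps a signal-layer outage from interrupting delivery to the system of record in this sketch.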

Distillation in front of Möbius, not raw logs

Dstl8 doesn’t just index logs — it clusters by pattern, scores sentiment, tracks severity and volume over time, and flags anomalies against each service’s baseline. Möbius reads that distilled signal and surfaces what’s new or worth attention, ranked by impact.
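A common first step in clustering by pattern is to reduce each line to a template by masking its variable parts (numbers, hex IDs, UUIDs), then count identical templates. The sketch below uses simple regex masking as an illustrative assumption. It is not Dstl8’s clustering method, and production log parsers often use more robust template miners such as Drain. to_template and cluster are hypothetical helper names.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

# Masks applied in order: UUIDs and hex IDs first so they are masked whole,
# then plain numbers (optionally with a ms/s unit suffix).
_MASKS = [
    (re.compile(r"\b[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}\b"), "<UUID>"),
    (re.compile(r"\b0x[0-9a-fA-F]+\b"), "<HEX>"),
    (re.compile(r"\b\d+(?:\.\d+)?(?:ms|s)?\b"), "<NUM>"),
]


def to_template(line: str) -> str:
    """Replace variable tokens so structurally identical lines share a template."""
    for pattern, token in _MASKS:
        line = pattern.sub(token, line)
    return line


def cluster(lines: Iterable[Tuple[str, str]]) -> List[Tuple[str, str, int]]:
    """Collapse (severity, message) pairs into (severity, template, count), largest first."""
    counts = Counter((sev, to_template(msg)) for sev, msg in lines)
    return [(sev, tpl, n) for (sev, tpl), n in counts.most_common()]


if __name__ == "__main__":
    storm = [("DEBUG", f"cache miss for key user:{i}") for i in range(10_000)]
    errors = [
        ("ERROR", "payment timeout after 3000ms for order 0x1f"),
        ("ERROR", "payment timeout after 2950ms for order 0x2a"),
    ]
    for row in cluster(storm + errors):
        print(row)
    # ('DEBUG', 'cache miss for key user:<NUM>', 10000)
    # ('ERROR', 'payment timeout after <NUM> for order <HEX>', 2)
```

The 10,000-line DEBUG storm becomes a single row with a count, which is the raw material that volume and severity tracking can then work from.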

Pattern-emergence detection for the AI SDLC cadence

“What’s new since the last deploy” is the default question Dstl8 is built around. When Claude Code ships a change, Dstl8 sees the pattern emerge in staging or prod before your on-call does.
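Reduced to its core, “what’s new since the last deploy” can be framed as a set difference over templates: anything seen after the deploy that never appeared in a baseline window is a candidate for attention. This minimal sketch assumes log lines have already been turned into templates. new_since_deploy and its min_count cutoff are hypothetical, not Dstl8’s detection logic.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def new_since_deploy(
    baseline: Iterable[str],
    after_deploy: Iterable[str],
    min_count: int = 1,
) -> List[Tuple[str, int]]:
    """Return templates absent from the baseline window, most frequent first.

    Both inputs are template strings with variables already masked.
    min_count is a hypothetical knob for skipping patterns seen too rarely to matter.
    """
    seen_before = set(baseline)
    fresh = Counter(t for t in after_deploy if t not in seen_before)
    return [(t, n) for t, n in fresh.most_common() if n >= min_count]


if __name__ == "__main__":
    baseline = ["GET /health <NUM>", "cache miss for key user:<NUM>"] * 500
    after = baseline[:100] + ["retry budget exhausted for <HEX>"] * 12 + ["feature flag <NUM> unset"]
    print(new_since_deploy(baseline, after, min_count=2))
    # [('retry budget exhausted for <HEX>', 12)]
```

No rule mentions “retry budget exhausted” anywhere; it surfaces only because it is absent from the baseline, which is the unknown-unknowns property the page describes.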

Query incidents from Claude Code, Cursor, or your terminal via MCP

The AI agent that wrote the code can ask Dstl8 what the code just did. Grounded in live OTLP logs, answered in the editor, no tab-switching.

DEBUG noise collapses into clustered signal

Leave DEBUG on where it’s useful. Dstl8 clusters repeated patterns into single events, so a 10,000-line storm becomes one row you can ignore, and Möbius still surfaces an anomalous DEBUG spike because it’s anomalous, not because a threshold fired.

What you get

How Teams Distill OTLP Signal Without Replacing Their Backend.

MÖBIUS

Distilled signal, with receipts.

Möbius doesn’t wade through raw OTLP output. Dstl8 distills your logs first — clustering by pattern, scoring sentiment, tracking severity and volume over time, and flagging anomalies against each service’s baseline. Möbius reads that signal and groups related anomalies into incidents, ranked by impact. Every claim links back to the underlying log evidence.

COMPLEMENT

Keep your backend. Add the signal layer.

1 OTLP exporter to add

Dstl8 is not a replacement for Loki, Datadog, New Relic, Honeycomb, or SigNoz. It sits alongside. Fan your OTLP log stream to Dstl8 and your existing backend at the same time. Search and long-term retention stay where they are. Signal and pattern emergence come from Dstl8.

UNKNOWN UNKNOWNS

Find what you didn’t know to alert on.

Your existing backend is great at finding known patterns fast. Dstl8’s distillation-first architecture surfaces pattern changes — the delta from baseline — without a pre-defined rule. That’s the class of failure AI-generated code tends to produce: new, unexpected, and not covered by last quarter’s dashboards.

AI SDLC

Built for the deploy cadence AI tools create.

Claude Code, Cursor, and Copilot are shipping more code, faster, than your observability stack was designed for. Dstl8 runs continuously against your log stream, notices when patterns emerge or shift, and surfaces them in the editor where the next change is being written.

DEBUG

Leave DEBUG on where it’s useful.

Static log-level config is a compromise every team loses at. Dstl8 collapses high-volume repeated patterns — including DEBUG storms — into clustered signals. The noise becomes one row. The anomalous DEBUG spike still gets surfaced, because Dstl8 is watching the delta from baseline, not a threshold you tuned last quarter.
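One simple way to watch the delta from baseline rather than a tuned threshold is to score each window’s count for a pattern against that pattern’s own recent history, for example with a z-score. The sketch assumes fixed-size time windows and a hypothetical z_limit of 3.0. BaselineWatcher is an illustrative name, and Dstl8’s actual baseline model may differ.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque, Optional


class BaselineWatcher:
    """Flag a pattern's per-window count when it departs sharply from its own history."""

    def __init__(self, history: int = 12, z_limit: float = 3.0, min_windows: int = 4):
        self.counts: Deque[int] = deque(maxlen=history)  # recent per-window counts
        self.z_limit = z_limit
        self.min_windows = min_windows  # don't score until some history exists

    def observe(self, count: int) -> Optional[float]:
        """Record this window's count; return its z-score if anomalous, else None."""
        z = None
        if len(self.counts) >= self.min_windows:
            mu = mean(self.counts)
            sigma = pstdev(self.counts) or 1.0  # avoid divide-by-zero on a flat baseline
            z = (count - mu) / sigma
        # Anomalous windows are appended too, so a sustained shift becomes the new baseline.
        self.counts.append(count)
        return z if z is not None and abs(z) >= self.z_limit else None


if __name__ == "__main__":
    debug_storm = BaselineWatcher()
    for window_count in [9800, 10100, 10050, 9950, 10000]:
        debug_storm.observe(window_count)  # a steady ~10k/window DEBUG pattern: no flags
    print(debug_storm.observe(40_000) is not None)  # True
```

A static “alert above 50,000 lines” rule would never fire on the 40,000-line window in this example, while the per-pattern baseline flags it because a steady ~10,000 is what that pattern normally does.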

Get Started

Terminal-native OTLP receiver before you commit to a bigger workflow.

2 min to first usable stream

Run Gonzo as an OTLP receiver on your laptop, point your collector or SDK exporter at it, and see OTLP logs stream in your terminal with local-zone timestamps, flexible filters, and AI-powered summarization. Bring in Dstl8 when you want Möbius doing the proactive pattern detection, cross-service correlation, and in-editor Q&A against your full log surface.

Complement, not replace

OpenTelemetry Log Analysis: Where Dstl8 Fits Alongside Your Backend.

Capability, compared across your existing OTel backend, generic log search, and ControlTheory:

Store and search OTLP logs

Long-term retention and compliance

Pattern and anomaly detection across services

Proactive “what’s new since the last deploy”

Clustered collapse of high-volume noise

Query incidents from Claude Code / Cursor / terminal


Get started

Start With Gonzo in Under 2 Minutes.

Open source terminal UI. No account, no agent, no configuration. Run Gonzo as an OTLP receiver and see your OpenTelemetry logs stream in the terminal before you commit to a bigger workflow.

Install Gonzo

Gonzo is the open source log analysis TUI that powers ControlTheory’s free tier. It tails your log streams, surfaces patterns by severity, and sends individual entries to an LLM for explanation, all from your terminal. With --otlp-enabled, Gonzo becomes a native OTLP receiver, accepting logs over gRPC (port 4317) and HTTP (port 4318) from any OTel Collector or SDK exporter. No config files, no cloud account, no agents.

```shell
# Pick one install method.

# Homebrew
brew install gonzo

# Go toolchain
go install github.com/control-theory/gonzo/cmd/gonzo@latest

# Prebuilt binary: download the latest release for your platform from the releases page:
# github.com/control-theory/gonzo/releases

# Nix
nix run github:control-theory/gonzo

# Build from source
git clone https://github.com/control-theory/gonzo.git
cd gonzo
make build
```

Run Gonzo as an OTLP receiver:

```shell
gonzo --otlp-enabled
# Gonzo now accepts OTLP over gRPC on port 4317
# and HTTP on port 4318.
# Point any OTLP-compatible exporter at it.
```
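For a quick end-to-end check without a Collector, you can hand-build one OTLP log record and POST it to Gonzo’s HTTP port. This stdlib-only Python sketch uses the OTLP/HTTP JSON encoding and the standard /v1/logs path. Whether Gonzo accepts JSON as well as protobuf on port 4318 is an assumption here, so consult the Gonzo OpenTelemetry docs if the request is rejected. build_otlp_log_payload and send are hypothetical helper names; the service name and message are placeholders.

```python
import json
import time
import urllib.request


def build_otlp_log_payload(service: str, body: str, severity_text: str = "INFO",
                           severity_number: int = 9) -> dict:
    """One log record in the OTLP/JSON shape: resourceLogs > scopeLogs > logRecords."""
    return {
        "resourceLogs": [{
            "resource": {"attributes": [
                {"key": "service.name", "value": {"stringValue": service}},
            ]},
            "scopeLogs": [{
                "scope": {"name": "otlp-smoke-test"},
                "logRecords": [{
                    # OTLP/JSON encodes 64-bit integers as decimal strings.
                    "timeUnixNano": str(time.time_ns()),
                    "severityNumber": severity_number,  # 9 = INFO on the OTLP severity scale
                    "severityText": severity_text,
                    "body": {"stringValue": body},
                }],
            }],
        }]
    }


def send(payload: dict, endpoint: str = "http://localhost:4318/v1/logs") -> int:
    """POST the payload as JSON and return the HTTP status code."""
    req = urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=5) as resp:
        return resp.status
```

With gonzo --otlp-enabled running locally, send(build_otlp_log_payload("checkout-api", "hello from an OTLP smoke test")) should return 200 if the payload is accepted; urllib raises HTTPError for error responses.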

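Point an OTel Collector at Gonzo:

```yaml
# Fragment to merge into your existing Collector config.
exporters:
  otlp/gonzo:
    endpoint: localhost:4317   # Gonzo's OTLP gRPC port
    tls:
      insecure: true           # plaintext gRPC for a local receiver

service:
  pipelines:
    logs:
      # Keep your existing receivers and processors, and list otlp/gonzo
      # next to any exporters you already use.
      exporters: [otlp/gonzo]
```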

Full OTLP usage guide including HTTP, custom ports, and SDK examples: Gonzo OpenTelemetry docs.

Your OTel backend stores the logs. Dstl8 distills the signal.

Free account. One OTLP exporter to add, nothing to rip out. Möbius reads your distilled log stream so you don’t have to. Early access to Dstl8 — no credit card, no sales call.


Keep Your OTel Backend.

Add the Signal Layer.

Fan your OTLP logs to Dstl8 and let Möbius surface the pattern changes your dashboard can’t see. Query from Claude Code, Cursor, or your terminal. Nothing to rip out.