Claude Code · MCP · Skills · Agent Workflows · CI/CD

Claude Code Closed the Loop to Commit.

Dstl8 Closes the Runtime Feedback Loop.

Claude Code runs as an autonomous agent. It decides the implementation, edits dozens of files, runs tests, commits, and reports back. The diff is too big to review line by line. The runtime is the one place the agent cannot see. Dstl8 reads the logs, finds the pattern, names the cause, and hands it to Claude Code through two native entry points: an MCP server for direct runtime queries and a Claude Code Skill that teaches the agent the orchestration. Every prompt is grounded in what actually happened in production, not in what the tests said.

4–8 hrs

MANUAL TIME TO TRACE AGENT-SHIPPED FAILURE TO ROOT CAUSE

15–30 min

SAME INCIDENT WITH MÖBIUS-LED ANALYSIS

1

INCIDENT VIEW ACROSS EVERY FILE THE SESSION TOUCHED

90 days

AMAZON’S AI-ASSISTED CODE SAFETY RESET

2 min

FROM brew install gonzo TO FIRST USABLE STREAM

Six failure modes

Ways Claude Code Sessions Break at Runtime.

Claude Code closed the loop from prompt to commit. It left the loop from commit to runtime wide open. These six failure modes are structural. They come with agent-led development. They do not get patched by a better test or a stricter reviewer. What changes is whether you find them before your users do.

01

The diff is too big to review line by line

One Claude Code session can touch dozens of files, rename symbols across modules, and commit the result. The session summary says “done.” The diff lands as one PR shaped by forty intermediate decisions you never reviewed. A human reviewer reading it as a normal code review is already outside the design of the workflow.

# one session

files touched: 47

symbols renamed: 12

tools called: 134

intermediate edits: ~280

final commit: 1

reviewer sees: 1 diff

decisions audited: ???

02

“Tests passing” is a claim, not a runtime fact

The session ends with all tests green and a summary of what shipped. Tests pass on the fixtures the agent had in context. Real traffic shapes, real third-party API responses, and the RLS policies that only apply in production are not in that context. “Done” is a state the agent declares. Production is what decides.

# session output

✓ 147 tests passing

✓ lint clean

✓ committed 2e8b4a1

✓ “Implementation complete. Ready for review.”

# production, 40 minutes later

TypeError: Cannot read properties of undefined (reading 'metadata')

at webhook.js:47

customer.subscription.updated · 22 occurrences
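The gap between the green test run and the production error above can be reproduced in a few lines. This is a minimal sketch in Python rather than the JavaScript of webhook.js. Every name and payload shape in it is hypothetical: it assumes the test fixture always carries an object with metadata, while some production events do not.

```python
from typing import Optional


def handle_event_naive(event: dict) -> str:
    # Assumes every event carries data.object.metadata.userId,
    # which holds for the fixture but not for all production events.
    return event["data"]["object"]["metadata"]["userId"]


def handle_event_guarded(event: dict) -> Optional[str]:
    # Walks the same path but returns None when any level is missing,
    # so the caller can log and skip instead of crashing.
    node = event
    for key in ("data", "object", "metadata", "userId"):
        if not isinstance(node, dict) or key not in node:
            return None
        node = node[key]
    return node


# Fixture-shaped event: what the agent had in context.
fixture = {"data": {"object": {"metadata": {"userId": "u_123"}}}}

# Production-shaped event (hypothetical): the object itself is missing.
production = {"data": {"object": None}}

print(handle_event_naive(fixture))       # works, like the test suite
print(handle_event_guarded(production))  # None, no crash

try:
    handle_event_naive(production)
except TypeError as exc:
    print(f"naive handler failed: {exc}")
```

Both handlers agree on the fixture. Only the production-shaped event tells them apart, which is why a green suite says little about runtime.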

03

The failed attempts that shaped the commit don’t appear anywhere

Claude Code iterates: runs bash, reads output, edits a file, runs tests, retries, edits again, commits. What lands is the final state. The reasoning, the approaches tried and abandoned, the assumption made in attempt three that carried into attempt eight, none of that is in the Git history. The log trail stops at the end state.

# what git log --oneline shows

2e8b4a1 fix checkout edge case in stripe webhook

# what happened inside the session

attempt 1: read schema, edit webhook.js (tests: 2 failing)

attempt 3: assumed metadata.userId on all events (tests: 1 failing)

attempt 5: added try/catch, swallowed error (tests: passing)

attempt 7: refactored around the catch (tests: passing)

commit: final state only
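Attempt 5 in the trace above is the step that hides the most. The sketch below uses hypothetical Python names to show how a swallowed exception turns a failing test green without fixing anything. Beside it is a variant with the same fallback that at least leaves evidence in the logs.

```python
import logging

logger = logging.getLogger("webhook")


def user_id_swallowed(event: dict):
    # The shape of attempt 5: the error disappears, tests go green,
    # and nothing reaches the logs.
    try:
        return event["metadata"]["userId"]
    except (KeyError, TypeError):
        return None


def user_id_logged(event: dict):
    # Same fallback, but the failure is recorded with the event type
    # so it can be found at runtime.
    try:
        return event["metadata"]["userId"]
    except (KeyError, TypeError):
        logger.warning("missing metadata.userId on event type=%s", event.get("type"))
        return None
```

Neither version fixes the assumption made in attempt 3. The second one only makes it visible to whatever is reading the logs.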

04

Subagent spawning means parallel contexts converging into one merge

When Claude Code delegates subtasks to subagents, code generation becomes parallel. Multiple independent reasoning streams edit different parts of the codebase, then merge into a single commit. Each subagent had its own context, its own assumptions, its own read of what the task required. Tracing which stream introduced a runtime failure is a puzzle the Git diff is not structured to answer.

main agent

├── subagent-1: update API schema (context A)

├── subagent-2: update client code (context B)

└── subagent-3: update integration tests (context C)

→ merge

→ one commit

→ one diff

# at runtime: the schema and the client disagree on optional fields

# which context had the right assumption? the diff does not say.
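A minimal Python sketch of the disagreement described above. It assumes a hypothetical Order type with an optional coupon field. One function stands in for the schema subagent and the other for the client subagent. Each passes its own checks, and the failure appears only when real data crosses the boundary.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Order:
    order_id: str
    coupon: Optional[str] = None  # context A: the schema made this optional


def serialize(order: Order) -> dict:
    # Schema side (context A): optional fields are omitted when unset.
    payload = {"order_id": order.order_id}
    if order.coupon is not None:
        payload["coupon"] = order.coupon
    return payload


def render_coupon(payload: dict) -> str:
    # Client side (context B): written as if coupon were always present.
    return payload["coupon"].upper()


# Each side passes its own tests in isolation.
assert serialize(Order("o_1", "spring")) == {"order_id": "o_1", "coupon": "spring"}
assert render_coupon({"order_id": "o_1", "coupon": "spring"}) == "SPRING"

# The disagreement only appears when an order without a coupon flows through both.
try:
    render_coupon(serialize(Order("o_2")))
except KeyError as exc:
    print(f"client failed on optional field: {exc}")
```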

05

Claude Code in CI merges without a human reading the diff

Teams wire Claude Code into pipelines for the obvious reason: velocity. The trade is that code gets generated, tested, and merged while nobody is watching. When something breaks overnight, the on-call engineer is debugging code no human ever read. Amazon’s response to this pattern (the 90-day safety reset, mandatory senior sign-off on AI-assisted code changes, new deterministic deploy guardrails) was not an overreaction. It was a recognition that CI had compressed review out of the loop.

# ci pipeline

trigger: issue labeled “auto-fix”

runner: claude-code-bot

review: none

merge policy: all tests green → merge main

deploy: auto on merge

# saturday 02:14

deploy shipped

silent 500s begin

first complaint: monday 09:00

# who reviewed the code? nobody.

06

The runtime context Claude Code needs to fix it right, it never sees

You ask Claude Code to fix a bug. It reads the tests, reads the source, writes a fix, reruns the tests, commits. The one thing it never reads is the production stack trace, the correlated incident, or the actual call pattern that produced the failure. The agent is prompted against the codebase. It is not prompted against the runtime. Every fix is a guess informed by what the tests said, not by what production did.

# what the agent sees when you say “fix the bug”

→ source files

→ test files

→ recent commits

→ error message you pasted in

# what the agent does not see

✗ the incident pattern across the last 6 hours

✗ which specific event types triggered the failure

✗ the deploy that shipped the regression

✗ the correlated infrastructure events

✗ what’s actually happening in runtime right now

# the fix is against half the picture.

Why this matters

Claude Code did not break the SDLC. It compressed it. Writing, testing, and shipping happen inside one session. The one thing it cannot compress is runtime. The agent ships into a blind spot, and the next prompt is written without the evidence that would have made the fix better. The problem is not that Claude Code is unreliable. The problem is that the feedback loop it needs to improve its own output is not closed.

The solution

How Claude Code Teams Close the Runtime Feedback Loop.

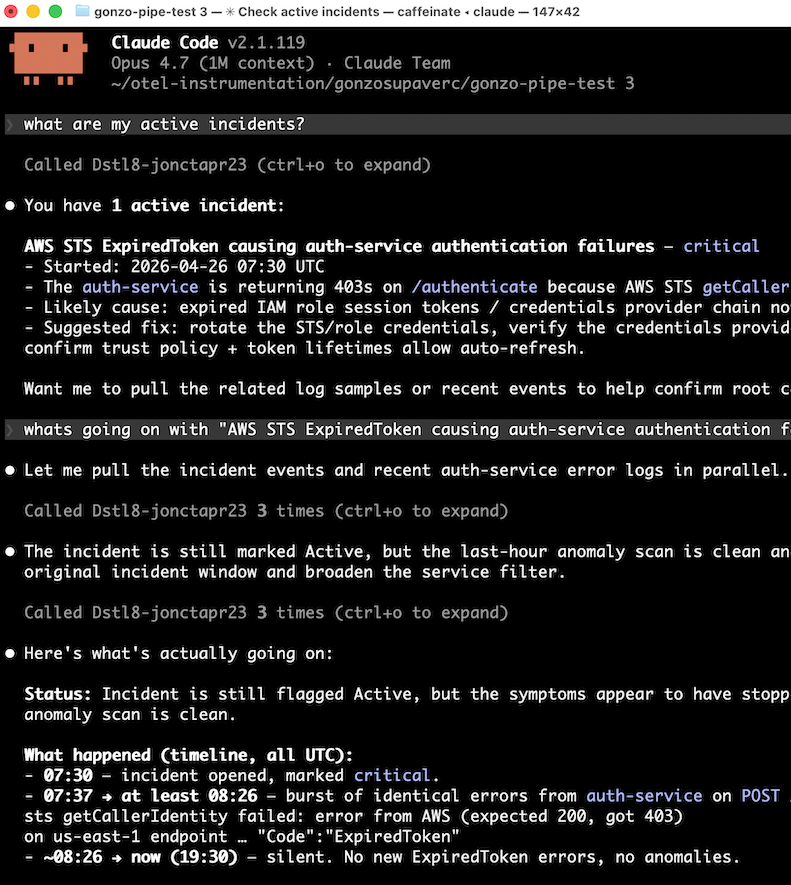

The goal is not another dashboard for reading isolated logs. The goal is an AI agent that watches the runtime Claude Code cannot, correlates what it sees, names the cause, and makes that answer available inside Claude Code as context for the next prompt. Möbius does the first hour of triage before you sit down. MCP and the Dstl8 Claude Code Skill make that triage something Claude Code itself can do.

Möbius watches the runtime Claude Code cannot

Dstl8’s AI agent monitors your live log streams for emerging failure patterns after every agent-shipped commit. When a session ends “done” but runtime disagrees, Möbius surfaces the pattern in minutes, not in tickets filed three days later.

One incident view for the forty files the session touched

When a single Claude Code session edits dozens of files, Dstl8 flattens the failure signal into one incident and points to the specific code path that produced it. You stop bisecting forty files and start on the fix.
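To make "flattening the failure signal" concrete, here is a deliberately crude Python sketch of grouping log lines by a normalized signature. It is not how Dstl8 or Möbius actually clusters incidents. The log format (space-separated key=value fields after the message) and the function names are assumptions for illustration.

```python
import re
from collections import Counter


def signature(message: str) -> str:
    # Blank out the values of key=value fields (request ids, user ids)
    # so repeats of the same failure map to one key, while the file and
    # line in the message itself are kept.
    return re.sub(r"=\S+", "=<v>", message)


def rank_patterns(lines: list[str]) -> list[tuple[str, int]]:
    # Count lines per signature, most frequent first.
    return Counter(signature(line) for line in lines).most_common()


logs = [
    "TypeError at webhook.js:47 request_id=9f3c2a user=u_1",
    "Timeout at billing.js:12 request_id=77aa01 user=u_1",
    "TypeError at webhook.js:47 request_id=1a2b3c user=u_2",
]
for pattern, count in rank_patterns(logs):
    print(count, pattern)
```

Three lines collapse to two patterns, and the most frequent one names a single code path instead of a list of touched files.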

Root cause narrative with named evidence

Möbius does not just group errors. It writes a short diagnosis: what the session shipped, what broke in runtime, which signals confirm it, and the first thing to try. Every claim links back to the underlying log entries.

Two native Claude Code entry points: MCP server and the Dstl8 Skill

Dstl8 exposes an MCP server for direct runtime queries and ships a Claude Code Skill that teaches the agent how to use it. MCP gives Claude Code the raw tools (incidents, patterns, anomalies, knowledge graph). The Skill gives it the playbook: a 7-step triage flow, deploy verification, cross-environment correlation. Install one, both, or switch between them depending on the session.

Feed runtime context back into the next prompt

The incident, the root cause, the correlated deploy event, and the affected code paths become structured context Claude Code uses directly. The agent stops fixing what the tests said was broken and starts fixing what the runtime actually showed.
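One way to picture "structured context" is a small record rendered into the top of the next prompt. The sketch below is an assumption about shape only. The RuntimeContext class and its field names are hypothetical, not Dstl8's actual schema. The example values reuse the webhook failure shown earlier on this page.

```python
from dataclasses import dataclass, field


@dataclass
class RuntimeContext:
    incident: str
    root_cause: str
    deploy: str
    code_paths: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        # Render the evidence as a short block to prepend to the task prompt.
        paths = "\n".join(f"- {p}" for p in self.code_paths)
        return (
            f"Incident: {self.incident}\n"
            f"Root cause: {self.root_cause}\n"
            f"Correlated deploy: {self.deploy}\n"
            f"Affected code paths:\n{paths}"
        )


ctx = RuntimeContext(
    incident="TypeError on customer.subscription.updated, 22 occurrences",
    root_cause="handler reads metadata on an undefined object",
    deploy="2e8b4a1",
    code_paths=["webhook.js:47"],
)
print(ctx.to_prompt())
```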

What you get

How Claude Code Teams Catch Runtime Failures the Agent Can’t See.

MÖBIUS

Proactive root cause analysis, with receipts.

Möbius watches your runtime continuously, clusters related failures into incidents, names the likely cause, and shows the exact log lines that support the diagnosis. You walk into the investigation with the answer already drafted. Ask follow-ups from Claude Code or your terminal without switching contexts.

MCP

Ask Dstl8 about runtime from inside Claude Code.

Dstl8 exposes an MCP server. Connect it in Claude Code’s MCP config and the agent can query your live runtime surface during any session: incidents, patterns, anomalies, the knowledge graph. “What’s the current error pattern on checkout-flow,” “show me the last incident’s root cause,” “was the most recent deploy clean.” Every answer is structured data, grounded in your actual runtime.
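Claude Code builds and sends these requests itself, but it can help to see what a runtime query looks like on the wire. MCP messages are JSON-RPC 2.0, and a tool is invoked with the tools/call method. In the Python sketch below, the tool name list_incidents and its arguments are hypothetical placeholders, not Dstl8's documented tool surface.

```python
import json


def tool_call(request_id: int, tool: str, arguments: dict) -> str:
    # MCP messages are JSON-RPC 2.0; tools are invoked with "tools/call".
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


# "list_incidents" and "service" are hypothetical, for illustration only.
print(tool_call(1, "list_incidents", {"service": "checkout-flow"}))
```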

SKILL

The Claude Code Skill that knows the Dstl8 playbook.

The Dstl8 Skill teaches Claude Code runtime orchestration: 7-step incident triage, cross-environment correlation (local to staging to production), deploy verification, knowledge graph writes. One npx skills add control-theory/dstl8-skill command and the agent picks up the workflows without you having to prompt them. Use alongside the MCP server, or use the MCP server alone if you have your own orchestration patterns.

BLAST RADIUS

Faster answers when “which file did the agent break” is the symptom, not the cause.

1 incident view across every file the session touched

The question stops being “which of the 47 files in the diff introduced the error?” and becomes “what pattern is Möbius seeing, and in which code path?” Dstl8 correlates the runtime failure to the session that shipped it and ranks what is actually broken.

CROSS-SESSION

One incident list across every agent session your team ships.

You don’t need another isolated log viewer. You need one list of active incidents, grouped by related signal, ranked by impact, with enough context to tell whether the failure originated in a single engineer’s session or a CI-triggered agent run. Möbius does that ranking continuously, so the first thing you see is the thing worth fixing.

CI

Runtime gate for autonomous shipping.

When Claude Code merges in CI, there is no human reading the diff. Dstl8 is the runtime-side replacement: always-on pattern detection that flags the moment a newly-shipped commit behaves differently in production, with Möbius-authored root cause the moment the signal is conclusive. You keep the velocity of CI and get the assurance a senior reviewer would have given you.
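As a rough illustration of what a runtime gate checks, the Python sketch below compares the error rate after a deploy with the rate before it. The thresholds, the function name, and the idea of using raw request counts are all assumptions. Real pattern detection, including whatever Dstl8 does internally, is more involved than a single ratio.

```python
def should_flag_deploy(baseline_errors: int, baseline_requests: int,
                       post_errors: int, post_requests: int,
                       max_ratio: float = 2.0, min_requests: int = 100) -> bool:
    """Flag a deploy when its error rate is well above the pre-deploy baseline."""
    if post_requests < min_requests:
        return False  # not enough traffic yet to call the signal conclusive
    baseline_rate = baseline_errors / max(baseline_requests, 1)
    post_rate = post_errors / post_requests
    # Floor the baseline so a perfectly clean baseline does not make
    # every single post-deploy error look like a regression.
    floor = 0.001
    return post_rate > max(baseline_rate, floor) * max_ratio


# 22 errors in the first 1,000 requests after deploy, against a quiet baseline.
print(should_flag_deploy(5, 10_000, 22, 1_000))
```

The min_requests guard is the part that matters for autonomous shipping: the gate stays silent until there is enough traffic to distinguish a regression from noise.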

Gonzo

Start with terminal-native streaming in the same terminal Claude Code runs in.

2 min to first usable stream

Use Gonzo to tail Vercel, Railway, Supabase, AWS, or any log source that writes to stdout or an API. Timestamps in your local zone, flexible filters, and AI-powered summarization on demand. Bring in Dstl8 when you want Möbius doing the proactive detection, cross-session correlation, and in-editor Q&A against your full runtime surface.

Comparison framing

Claude Code in Production: Your Options.

Capability · MANUAL REVIEW · AI CODING TEAMS TODAY · ControlTheory

Catches runtime failure from agent-shipped commit

Correlates failure to the session that shipped it

Surfaces intermediate agent decisions

Runtime evidence inside the next Claude Code prompt

Ranked incidents by real user impact

Cross-session pattern detection across the team

Common questions

Claude Code Runtime Feedback — Questions from Engineering Teams.

Get started

Start With Gonzo in Under 2 Minutes.

Open source terminal UI. No account, no agent, no configuration. Tail your runtime logs in the same terminal Claude Code runs in.

Install Gonzo

Gonzo is the open source log analysis TUI that powers ControlTheory’s free tier. It tails your log streams, surfaces patterns by severity, and sends individual entries to an LLM for explanation, all from your terminal. No config, no cloud account, no agents. It’s the fastest way to start seeing what your agent-shipped code is doing in production.

brew install gonzo

go install github.com/control-theory/gonzo/cmd/gonzo@latest

# Download the latest release for your platform from the releases page:

# github.com/control-theory/gonzo/releases

nix run github:control-theory/gonzo

git clone https://github.com/control-theory/gonzo.git

cd gonzo

make build

Tail your runtime logs:

# Vercel

vercel logs --follow --output json | gonzo

# Railway

railway logs --service api | gonzo

# Any stdout source

tail -f app.log | gonzo

Then connect Claude Code to Dstl8, two ways:

# MCP server — direct runtime queries

Add the Dstl8 MCP server to your Claude Code MCP config.

claude mcp add --transport http Dstl8 --scope user https://{ORG_ID}.app.dstl8.ai/mcp --header "Authorization: Bearer {DSTL8_TOKEN}"

# Dstl8 Skill — runtime orchestration playbook

npx skills add control-theory/dstl8-skill

Use either, use both. The MCP server gives the agent the tools. The Skill gives it the playbook.

Stop Meeting Your Code in Production. Let Dstl8 Close the Loop.

Stream logs with Gonzo. Let Möbius detect, correlate, and diagnose inside Dstl8. Bring runtime into Claude Code through the MCP server, the Dstl8 Skill, or both. Every prompt is grounded in what actually happened in production, not in what the agent assumed.

