GitHub Copilot Speeds Up Delivery. Now Debug AI-Generated Failures Fast.

GitHub Copilot autocomplete, Copilot code review, and GitHub Spark move code from prompt to pull request fast. Production is where the confidence gap shows up. Find root cause fast, improve code quality, and debug AI-generated code before bugs turn into outages.

Four failure modes

Four Ways GitHub Copilot Code Fails After It Looks Done.

GitHub Copilot code often passes the vibe check before it passes production reality. The common failure is not syntax. It is hidden assumptions about data, auth, environment, and edge cases. That is where AI code risk shows up.

Autocomplete matched the local context, not the live response shape

Copilot autocomplete is optimized to continue whatever looks plausible in the editor. Real APIs return nullable fields, unexpected types, missing keys, or plan-specific payloads. The generated branch merges cleanly. The first production request is where the mismatch appears.
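Here is what that mismatch can look like, as a minimal Python sketch. The payload shapes, the field names plan and seats, and both functions are hypothetical; the point is the contrast between code that fits the local context and code that checks the shape production actually sends.

```python
from typing import Any


def seat_count_naive(payload: dict[str, Any]) -> int:
    # Plausible continuation: assumes "plan" is always a dict with an int "seats".
    return payload["plan"]["seats"]


def seat_count_checked(payload: dict[str, Any]) -> int:
    # Treat a missing or null plan (e.g. a free tier) as zero seats.
    plan = payload.get("plan") or {}
    seats = plan.get("seats", 0)
    # Accept counts encoded as digit strings, such as "12".
    if isinstance(seats, str) and seats.isdigit():
        seats = int(seats)
    # Reject anything else loudly instead of passing it downstream.
    if isinstance(seats, bool) or not isinstance(seats, int):
        raise ValueError(f"unexpected seats value: {seats!r}")
    return seats


# Shapes the editor context suggested vs. shapes a live API might send.
samples = [
    {"plan": {"seats": 5}},
    {"plan": None},
    {"plan": {"seats": "12"}},
    {},
]

for sample in samples:
    try:
        naive = repr(seat_count_naive(sample))
    except (KeyError, TypeError) as exc:
        naive = type(exc).__name__
    print(f"{sample} -> naive={naive}, checked={seat_count_checked(sample)}")
```

Note that the naive version does not even fail on the third sample: it returns the string '12', which breaks later and farther from the cause.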

Copilot can propose a fix. It cannot guarantee the failure lives in the code you selected

Slash commands like /fix are useful for fast iteration, but they operate on the code and context you provide in the chat. If the real root cause lives in a missing environment variable, a hidden retry path, a stale remote, or an auth boundary, the patch can look reasonable while missing the actual incident.
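One way to keep a /fix patch from chasing the wrong layer is to make environment assumptions fail loudly at startup. A minimal Python sketch, assuming hypothetical variable names (PAYMENTS_API_URL, PAYMENTS_API_TOKEN): a missing token then shows up as an explicit configuration error instead of a confusing failure deep inside a generated handler.

```python
import os
from collections.abc import Mapping, Sequence

# Hypothetical variable names: list the ones your service actually reads.
REQUIRED_VARS = ("PAYMENTS_API_URL", "PAYMENTS_API_TOKEN")


class ConfigError(RuntimeError):
    """The runtime environment does not match what the code assumes."""


def missing_vars(env: Mapping[str, str], required: Sequence[str]) -> list[str]:
    # Unset and blank values are treated the same way.
    return [name for name in required if not env.get(name, "").strip()]


def check_environment(env: Mapping[str, str] = os.environ,
                      required: Sequence[str] = REQUIRED_VARS) -> None:
    # Call once at startup so a bad deploy fails before serving traffic.
    missing = missing_vars(env, required)
    if missing:
        raise ConfigError("missing environment variables: " + ", ".join(missing))


# Example: the token was never set in this deploy environment.
deploy_env = {"PAYMENTS_API_URL": "https://payments.example.invalid"}
try:
    check_environment(deploy_env)
except ConfigError as exc:
    print(exc)  # missing environment variables: PAYMENTS_API_TOKEN
```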

GitHub Spark and AI app builders compress build time, not debugging time

GitHub Spark can generate a full-stack app with storage, AI features, GitHub auth, and one-click deployment. That reduces setup work dramatically. It does not remove the need for AI code analysis once live traffic, auth scopes, or data mutations hit paths the prototype never exercised.

Legacy code makes AI code assistants less reliable, not more, when your mental model is already thin

AI code assistant reliability drops on legacy code when the repository has partial types, undocumented side effects, historical naming, and weak observability. Copilot can still write plausible edits and code review summaries. But when the incident lands, you are debugging logic nobody on the team has fully reconstructed.

The productivity gain is real. So is the verification gap. Research and field reports point in the same direction: AI-generated code can move faster while still carrying correctness, security, and runtime reliability risk that only appears after merge or deploy.

The solution

How Teams Debug GitHub Copilot Bugs Without Guessing.

The goal is not to stop using Copilot. The goal is to make debugging AI code reliable when Copilot code review, GitHub Spark, and autocomplete ship more changes than humans can manually reason through.

See the runtime mismatch before it becomes a support thread

Whether you are fixing Copilot-generated code, a broken GitHub Pages site, or a GitHub Spark prototype that breaks on real auth, the live signal tells you which assumption failed.

Separate platform errors from application bugs

"Repository not found" errors, 403 errors on git push, and GitHub Pages 404s often look like app regressions until you correlate remote config, auth, deploy events, and code paths in one place.
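A rough first pass at that separation is a triage rule set over raw log lines. This Python sketch is an illustration under assumptions, not a product feature: the two git patterns match messages git typically prints when talking to GitHub over HTTPS, while the Pages rule assumes a hypothetical access-log format ("GET url status"). Anything unmatched stays a candidate app bug until correlation rules it in or out.

```python
import re

# Ordered triage rules: the first matching pattern wins.
# The git messages are what git prints for GitHub over HTTPS; the Pages rule
# assumes an access-log style line ("GET <url> <status>") and is illustrative.
RULES = [
    ("platform:repository", re.compile(r"Repository not found|repository '[^']*' not found")),
    ("platform:auth", re.compile(r"returned error: 403|Permission to \S+ denied")),
    ("platform:pages", re.compile(r"github\.io\S*\s+404\b")),
]


def classify(line: str) -> str:
    """Label a log line as a platform error or a candidate application bug."""
    for category, pattern in RULES:
        if pattern.search(line):
            return category
    # Nothing platform-shaped matched, so the application code is still a suspect.
    return "app:candidate"


samples = [
    "remote: Repository not found.",
    "fatal: unable to access 'https://github.com/octo/app.git/': The requested URL returned error: 403",
    "GET https://octo.github.io/docs/ 404",
    "TypeError: Cannot read properties of undefined (reading 'seats')",
]

for line in samples:
    print(f"{classify(line):<20} {line}")
```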

Turn AI code analysis into an answer, not another prompt

Use Copilot to generate and revise code. Use production telemetry to determine what actually failed. That is how you improve code quality assurance instead of adding more speculative patches.

Debug across app, deploy, auth, and repository events

One incident often spans several layers: a Copilot-generated handler, GitHub auth, the Pages publish source, the remote URL, token scope, and downstream API behavior. Finding the root cause takes all of it.

Know when to pause autocomplete and inspect reality

Sometimes the fastest fix is to turn off Copilot autocomplete, reproduce the failing path, inspect the logs, and re-enable assistance only after the team understands what the system is actually doing.

What you get

What GitHub Copilot Debugging Looks Like When It Works.

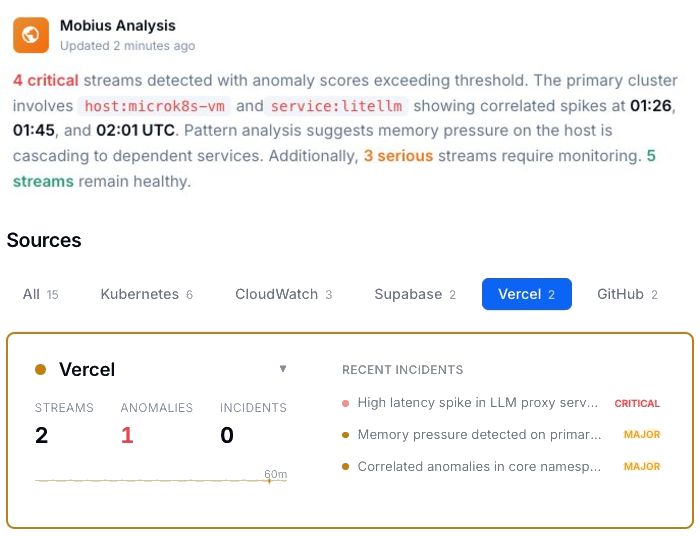

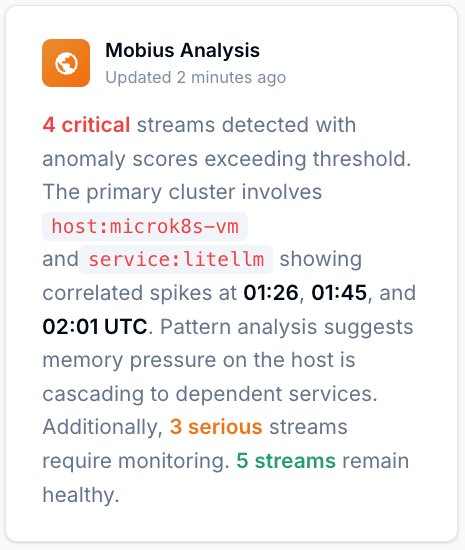

See which AI-generated failures are real, which are noisy, and which are spreading.

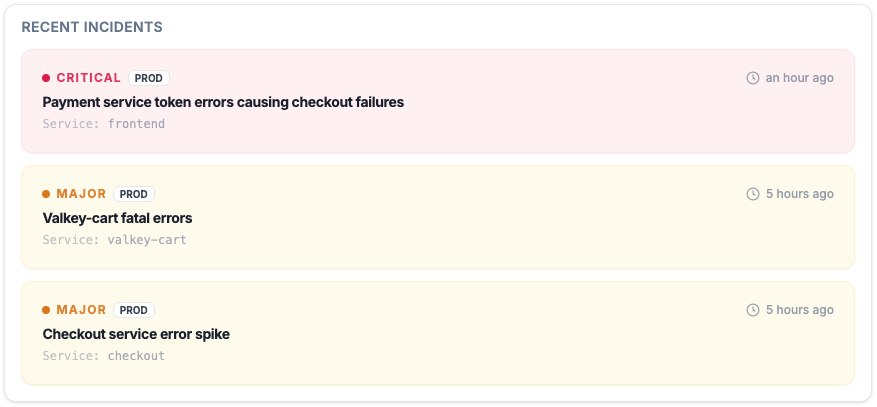

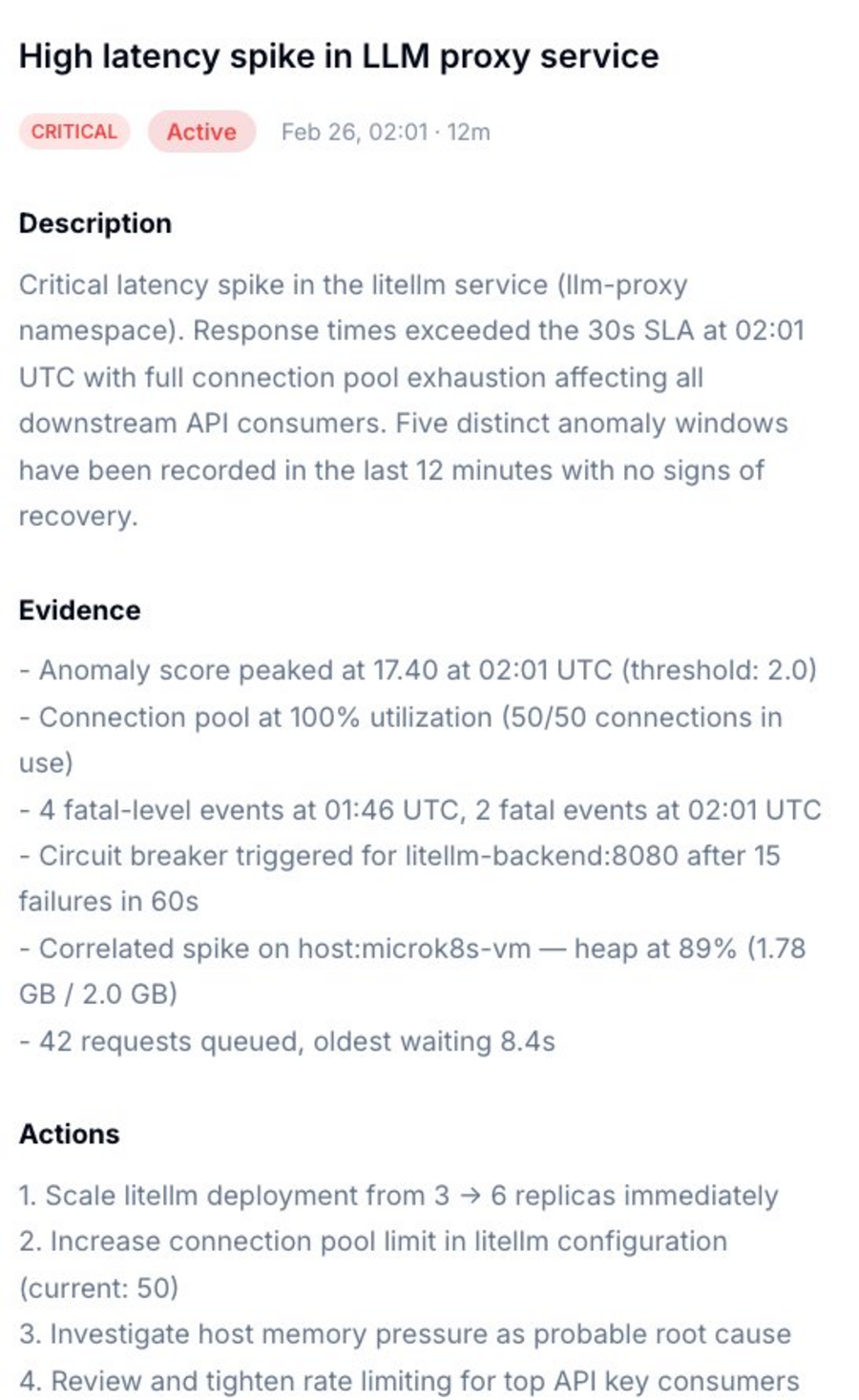

Instead of digging through scattered logs after a GitHub Copilot bug lands, you get a prioritized incident view with timestamps, severity, and evidence connected to the runtime.

Improve code quality without slowing down authorship.

AI-assisted output can be productive and still carry security and reliability debt. Code quality assurance starts after generation, not before it.

Root cause, evidence, and next actions in one place.

Get a diagnosis that tells you whether the failure belongs to auth, data shape, environment drift, missing publish artifact, or an actual code path regression from GitHub Copilot code.

Ask what changed, what broke, and what is correlated.

Natural language over real telemetry. Use it to investigate GitHub Copilot code, GitHub Spark apps, or legacy services touched by AI suggestions without starting from a blank terminal.

Start with Gonzo — free, open source, 2 minutes.

Use Gonzo to inspect what your AI-generated code is doing in production before you ask for another fix, another review, or another autocomplete.

Full vibe stack debugging

Debugging GitHub Copilot Code: Your Options.

| Capability | Manual | Copilot-Only Workflow | ControlTheory |
|---|---|---|---|
| Catch runtime mismatches hidden by Copilot autocomplete | ✗ after deploy | ✗ prompt-dependent | ✓ behavior-first |
| Separate GitHub Pages, auth, and repository errors from app bugs | ✗ manual triage | ✗ fragmented context | ✓ |
| Use /fix on top of verified incident evidence | ✗ | ✗ patch-first | ✓ evidence-first |
| Cross-service pattern detection for AI code risk | ✗ | ✗ | ✓ emergent |
| Improve code quality with production feedback loops | ✗ slow | ✗ subjective | ✓ |
| Time to first insight | Hours | Prompt by prompt | 2 minutes |

Common questions

GitHub Copilot Debugging — Questions from Engineering Teams.

Why does GitHub Copilot code look right and still fail in production?

Because the model is optimizing for plausible continuation, not proof of runtime correctness. GitHub Copilot can generate useful code suggestions, fixes, tests, and explanations, but the failure often lives in live data, auth scopes, repository permissions, missing environment assumptions, or edge cases outside the local context.

Can I use VS Code GitHub Copilot slash commands like /fix to debug AI code?

Yes — and they are often useful. GitHub documents /fix as a common slash command for proposing fixes to selected code. The limitation is that slash commands work on the context you give them. They are strongest after you already know the failing behavior, not before.

How do I turn off Copilot autocomplete in the middle of an incident?

GitHub documents that you can enable or disable Copilot from within supported IDEs, including VS Code and JetBrains IDEs, and its keyboard shortcuts documentation for VS Code also lists a toggle command. Teams often disable completions temporarily when they need to inspect a failing path without more suggestions competing for attention.

What does GitHub Spark change about debugging?

GitHub Spark makes it easier to build and deploy full-stack apps with natural language, visual tools, or code. That compresses setup and prototyping. It does not remove the need to verify how the app behaves under real auth, data, and runtime conditions after publish.

How do I fix GitHub Pages 404s, repository not found, or 403 push errors when AI-generated code seems fine?

Start by checking the platform path before changing application code. GitHub docs point to common causes such as a missing or mis-cased index.html at the publish source for Pages, a wrong remote URL or missing permissions for repository-not-found errors, and token-based authentication or SSO authorization issues for HTTPS 403s. These are exactly the kinds of failures that can masquerade as code bugs if you only inspect the generated diff.
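The first of those checks is easy to script before anyone touches application code. A minimal Python sketch, assuming a plain static site whose publish source is a local folder (the "docs" path in the usage line is hypothetical) and checking only for index.html as described above. It distinguishes a missing entry file from a mis-cased one, which may look fine on a case-insensitive laptop filesystem.

```python
from pathlib import Path


def check_pages_entry(publish_dir: str) -> str:
    """Report whether a publish folder has an exact-case index.html at its top level."""
    root = Path(publish_dir)
    if not root.is_dir():
        return f"publish source not found: {publish_dir}"
    names = [p.name for p in root.iterdir() if p.is_file()]
    if "index.html" in names:
        return "ok: index.html present"
    # A near miss like Index.html may work on a case-insensitive local
    # filesystem but does not match the exact name the site needs.
    near_misses = [n for n in names if n.lower() == "index.html"]
    if near_misses:
        return f"mis-cased entry file: {near_misses[0]} (expected index.html)"
    return "missing index.html at the top level of the publish source"


print(check_pages_entry("docs"))  # "docs" is a hypothetical publish folder
```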

Get started

Start With Gonzo in Under 2 Minutes.

Open source terminal UI. No account, no agent, no configuration. Run it next to GitHub Copilot in VS Code and inspect the runtime before you approve the next AI-generated patch.

Install Gonzo

Gonzo is the open source log analysis TUI that powers ControlTheory’s free tier. It gives teams a fast path to AI code analysis, debugging AI code, and code quality assurance without rewriting their whole workflow. Use it when GitHub Copilot code review says “looks good” and production still says otherwise.

Connect to your platform

Use GitHub Copilot. Debug it with confidence.

Free account. Gonzo running against your production logs in 2 minutes. Early access to Dstl8. No credit card, no sales call.

No credit card · no sales call · no drip sequence
